Docker EOF问题排查

一、前言

问题排查过程,源码部分均由我的开发同事排查和记录;在征得其同意后,由我发表在此。

二、问题

某天接到客户反馈,pod的事件中出现大量的 warning event: Readiness probe failed: OCI runtime exec failed: exec failed: EOF: unknown。但不影响客户访问该服务。

三、环境

特别说明:客户在负责运行业务的k8s节点上坚持开启了cpu-manager

四、排查

1、接到客户反馈后,检查该pod所在节点的kubelet日志,如下:

I0507 03:43:28.310630 57003 prober.go:112] Readiness probe for "adsfadofadfabdfhaodsfa(d1aab5f0-

ae8f-11eb-a151-080027049c65):c0" failed (failure): OCI runtime exec failed: exec failed: EOF: unknown

I0507 07:08:49.834093 57003 prober.go:112] Readiness probe for "adsfadofadfabdfhaodsfa(a89a158e-

ae8f-11eb-a151-080027049c65):c0" failed (failure): OCI runtime exec failed: exec failed: unexpected EOF: unknown

I0507 10:06:58.307881 57003 prober.go:112] Readiness probe for "adsfadofadfabdfhaodsfa(d1aab5f0-

ae8f-11eb-a151-080027049c65):c0" failed (failure): OCI runtime exec failed: exec failed: EOF: unknown

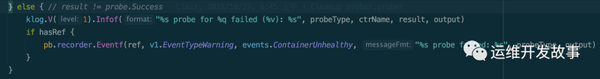

probe的错误类型为failure,对应代码如下:

2、再查看docker日志,如下:

time="2021-05-06T16:51:40.009989451+08:00" level=error msg="stream copy error: reading from a closed fifo"

time="2021-05-06T16:51:40.010054596+08:00" level=error msg="stream copy error: reading from a closed fifo"

time="2021-05-06T16:51:40.170676532+08:00" level=error msg="Error running exec 8e34e8b910694abe95a467b2936b37635fdabd2f7b7c464d

fef952fa5732aa4e in container: OCI runtime exec failed: exec failed: EOF: unknown"

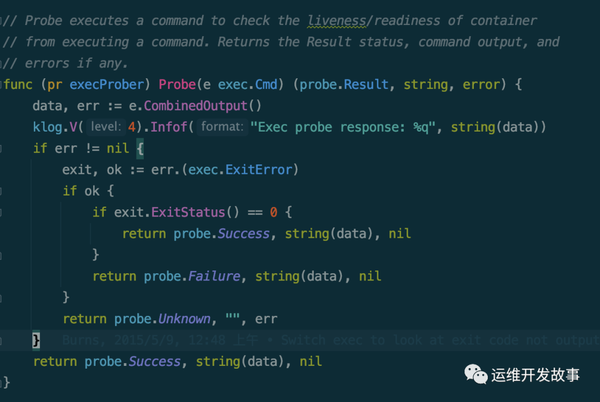

虽然从Docker日志中显示是 stream copy error,但实际上是底层的 runc 返回了 EOF,导致返回了 error。3、因为日志中显示 probe 类型为 Failure,因此 e.CombinedOutPut() 的 err != nil,并且 ExitStatus 不为 0,data 的值为 OCI runtime exec failed: exec failed: unexpected EOF: unknown,最终会调用到 RunInContainer 方法

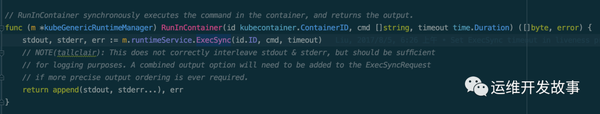

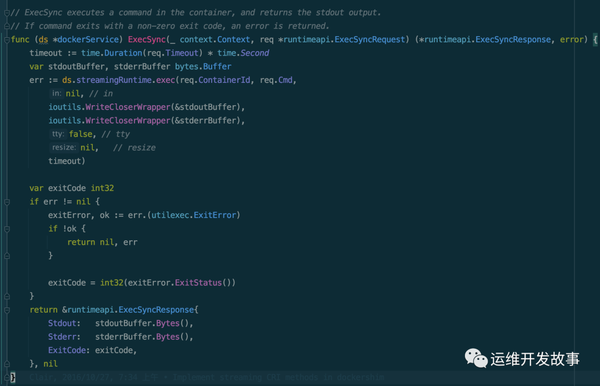

ExecSync 是通过 GRPC 调用了 dockershim 的 ExecSync

dockershim 最终调用到 ExecInContainer 方法,并且该方法的返回了 exitcode 不为 0 的 error。

func (*NativeExecHandler) ExecInContainer(client libdocker.Interface, container *dockertypes.ContainerJSON, cmd []string, stdin io.Reader, stdout, stderr io.WriteCloser, tty bool, resize <-chan remotecommand.TerminalSize, timeout time.Duration) error {

execObj, err := client.CreateExec(container.ID, createOpts)

startOpts := dockertypes.ExecStartCheck{Detach: false, Tty: tty}

streamOpts := libdocker.StreamOptions{

InputStream: stdin,

OutputStream: stdout,

ErrorStream: stderr,

RawTerminal: tty,

ExecStarted: execStarted,

err = client.StartExec(execObj.ID, startOpts, streamOpts)

if err != nil {

return err

ticker := time.NewTicker(2 * time.Second)

defer ticker.Stop()

count := 0

for {

inspect, err2 := client.InspectExec(execObj.ID)

if err2 != nil {

return err2

if !inspect.Running {

if inspect.ExitCode != 0 {

err = &dockerExitError{inspect}

break

count++

if count == 5 {

klog.Errorf("Exec session %s in container %s terminated but process still running!", execObj.ID, container.ID)

break

<-ticker.C

return err

ExecInContainer 做了以下几件事:

-

调用 CreateExec 创建 ExecID

-

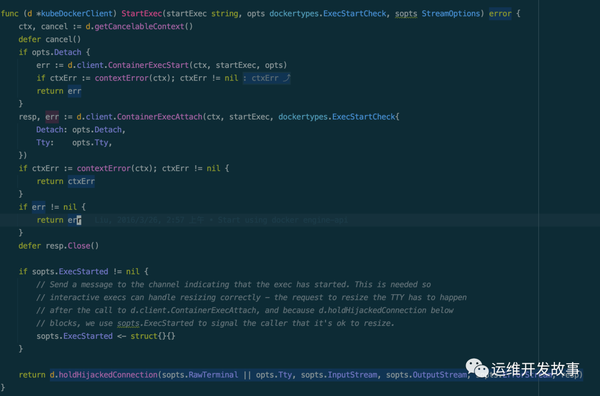

调用 StartExec 执行 exec,并通过 holdHijackedConnection 来重定向输入输出。将 inputStream 写入到 connection,并将 response stream 重定向到 stdout,stderr。

-

调用 InspectExec 获取本次 exec 的运行状态和 exitcode

那么日志中打印的报错就是 response stream 传递过来的字符流。也就是说,dockerd 的 response 中包含了错误值。

此时去 docker 代码中查找原因,ExecStart 会调用到 dockerd 的以下代码:

根据上面 docker 的日志,err 的错误信息为:OCI runtime exec failed: exec failed: EOF: unknown。也就是说 ContainerExecStart 返回了错误。ContainerExecStart 会调用到 containerd.Exec,也就是 dockerd 和 containerd 之间进行通信

// docker/libcontainerd/client_daemon.go

// Exec creates exec process.

// The containerd client calls Exec to register the exec config in the shim side.

// When the client calls Start, the shim will create stdin fifo if needs. But

// for the container main process, the stdin fifo will be created in Create not

// the Start call. stdinCloseSync channel should be closed after Start exec

// process.

func (c *client) Exec(ctx context.Context, containerID, processID string, spec *specs.Process, withStdin bool, attachStdio StdioCallback) (int, error) {

ctr := c.getContainer(containerID)

if ctr == nil {

return -1, errors.WithStack(newNotFoundError("no such container"))

t := ctr.getTask()

if t == nil {

return -1, errors.WithStack(newInvalidParameterError("container is not running"))

if p := ctr.getProcess(processID); p != nil {

return -1, errors.WithStack(newConflictError("id already in use"))

var (

p containerd.Process

rio cio.IO

err error

stdinCloseSync = make(chan struct{})

fifos := newFIFOSet(ctr.bundleDir, processID, withStdin, spec.Terminal)

defer func() {

if err != nil {

if rio != nil {

rio.Cancel()

rio.Close()

p, err = t.Exec(ctx, processID, spec, func(id string) (cio.IO, error) {

rio, err = c.createIO(fifos, containerID, processID, stdinCloseSync, attachStdio)

return rio, err

if err != nil {

close(stdinCloseSync)

return -1, wrapError(err)

ctr.addProcess(processID, p)

// Signal c.createIO that it can call CloseIO

// the stdin of exec process will be created after p.Start in containerd

defer close(stdinCloseSync)

if err = p.Start(ctx); err != nil {

// use new context for cleanup because old one may be cancelled by user, but leave a timeout to make sure

// we are not waiting forever if containerd is unresponsive or to work around fifo cancelling issues in

// older containerd-shim

ctx, cancel := context.WithTimeout(context.Background(), 45*time.Second)

defer cancel()

p.Delete(ctx)

ctr.deleteProcess(processID)

return -1, wrapError(err)

return int(p.Pid()), nil

这里 new 了一个 FIFOSet,而 reading from a closed fifo 仅出现在 fifo 被 close 掉时,仍然在读取的情况。即 f.Close() 发生在 f.Read() 前面。在外层可以看到

defer func() {

if err != nil {

if rio != nil {

rio.Cancel()

rio.Close() // 这里 Close 会导致 fifo close

p, err = t.Exec(ctx, processID, spec, func(id string) (cio.IO, error) {

rio, err = c.createIO(fifos, containerID, processID, stdinCloseSync, attachStdio)

return rio, err

if err != nil {

close(stdinCloseSync)

return -1, wrapError(err)

ctr.addProcess(processID, p)

// Signal c.createIO that it can call CloseIO

// the stdin of exec process will be created after p.Start in containerd

defer close(stdinCloseSync)

// p.Start 出错,会导致内部的 fifo 关闭,从而导致 reading from a closed fifo 的问题

if err = p.Start(ctx); err != nil {

// use new context for cleanup because old one may be cancelled by user, but leave a timeout to make sure

// we are not waiting forever if containerd is unresponsive or to work around fifo cancelling issues in

// older containerd-shim

ctx, cancel := context.WithTimeout(context.Background(), 45*time.Second)

defer cancel()

p.Delete(ctx)

ctr.deleteProcess(processID)

return -1, wrapError(err)

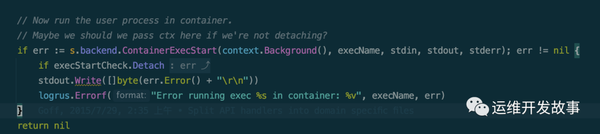

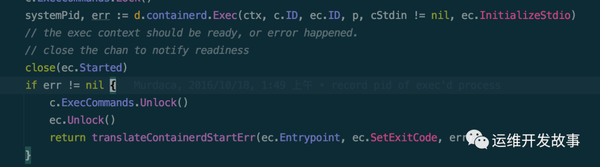

p.Start 调用到下面的代码,通过 GRPC 和 containerd 通信。

// github.com/containerd/containerd/task.go

func (t *task) Start(ctx context.Context) error {

r, err := t.client.TaskService().Start(ctx, &tasks.StartRequest{

ContainerID: t.id,

if err != nil {

t.io.Cancel()

t.io.Close()

return errdefs.FromGRPC(err)

t.pid = r.Pid

return nil

这个 GRPC 调用会到达 containerd 以下的代码:

func (e *execProcess) start(ctx context.Context) (err error) {

var (

socket *runc.Socket

pidfile = filepath.Join(e.path, fmt.Sprintf("%s.pid", e.id))

if e.stdio.Terminal {

if socket, err = runc.NewTempConsoleSocket(); err != nil {

return errors.Wrap(err, "failed to create runc console socket")

defer socket.Close()

} else if e.stdio.IsNull() {

if e.io, err = runc.NewNullIO(); err != nil {

return errors.Wrap(err, "creating new NULL IO")

} else {

if e.io, err = runc.NewPipeIO(e.parent.IoUID, e.parent.IoGID, withConditionalIO(e.stdio)); err != nil {

return errors.Wrap(err, "failed to create runc io pipes")

opts := &runc.ExecOpts{

PidFile: pidfile,

IO: e.io,

Detach: true,

if socket != nil {

opts.ConsoleSocket = socket

// err 返回了 exec failed: EOF: unknown

// 这里的 runtime 就是 runc 的二进制文件执行命令

if err := e.parent.runtime.Exec(ctx, e.parent.id, e.spec, opts); err != nil {

close(e.waitBlock)

return e.parent.runtimeError(err, "OCI runtime exec failed")

Exec 的代码如下:

// Exec executres and additional process inside the container based on a full

// OCI Process specification

func (r *Runc) Exec(context context.Context, id string, spec specs.Process, opts *ExecOpts) error {

f, err := ioutil.TempFile(os.Getenv("XDG_RUNTIME_DIR"), "runc-process")

if err != nil {

return err

defer os.Remove(f.Name())

err = json.NewEncoder(f).Encode(spec)

f.Close()

if err != nil {

return err

args := []string{"exec", "--process", f.Name()}

if opts != nil {

oargs, err := opts.args()

if err != nil {

return err

args = append(args, oargs...)

cmd := r.command(context, append(args, id)...)

if opts != nil && opts.IO != nil {

opts.Set(cmd)

if cmd.Stdout == nil && cmd.Stderr == nil {

data, err := cmdOutput(cmd, true)

if err != nil {

return fmt.Errorf("%s: %s", err, data)

return nil

ec, err := Monitor.Start(cmd)

if err != nil {

return err

if opts != nil && opts.IO != nil {

if c, ok := opts.IO.(StartCloser); ok {

if err := c.CloseAfterStart(); err != nil {

return err

status, err := Monitor.Wait(cmd, ec)

if err == nil && status != 0 {

err = fmt.Errorf("%s did not terminate sucessfully", cmd.Args[0])

return err

因此是 runc 在运行后输出了 exec failed: EOF: unknown 这个错误。

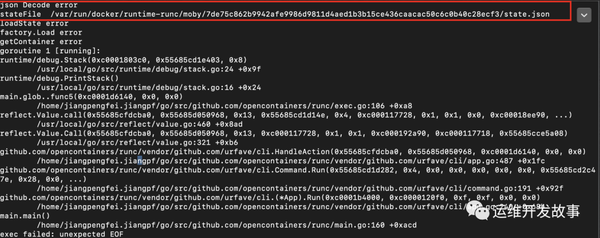

将 runc 指令循环执行,可少量复现。经过排查,发现 runc exec 在运行期间会读取 container 的 state.json,并使用 json decode 时出现异常。

此时联想到开启 kubelet cpu-manager 后,会 update container,也就是更新这个 state.json 文件。导致 runc 读到了部分 cpu-manager 更新的内容。从而导致 json decode 失败。此时排查 runc EOF 和 kubelet cpu-manager update container(默认每 10s 更新一次) 的时间,发现时间点刚好吻合,验证猜想。

查看 runc 是否有修复,发现了这个 pr: https:// github.com/opencontaine rs/runc/pull/2467 。 修复思路是将 saveState 变成原子操作,这样就不会出现读取 state.json 时,读到部分写入的内容,导致 unexpected EOF (或 EOF)的问题

// 原来的

func (c *linuxContainer) saveState(s *State) error {

f, err := os.Create(filepath.Join(c.root, stateFilename))

if err != nil {

return err

defer f.Close()

return utils.WriteJSON(f, s)

// 修复后的

func (c *linuxContainer) saveState(s *State) (retErr error) {

tmpFile, err := ioutil.TempFile(c.root, "state-")

if err != nil {

return err

defer func() {

if retErr != nil {

tmpFile.Close()

os.Remove(tmpFile.Name())

err = utils.WriteJSON(tmpFile, s)

if err != nil {

return err

err = tmpFile.Close()

if err != nil {

return err