Android camera capture, H264 encoding, and RTMP push streaming

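MediaPlus is an Android multimedia component built from scratch on top of FFmpeg. It mainly covers capture, encoding, synchronization, push streaming, filters, and other features commonly needed for live streaming and short video. The documentation will be updated as new features are added. Thanks for following.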

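- The Android camera supports quite a few capture formats, such as NV21, NV12, and YV12. They differ in how the U and V samples are arranged. For the details of YUV, see a more thorough reference such as [总结]FFMPEG视音频编解码零基础学习方法.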

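What you need to understand is YUV sampling, the data layout, and how to calculate the buffer size. YUV sampling:

The YUV420P plane order is shown in the figure below: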

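NV12, NV21, YV12, and I420 all belong to YUV420, but YUV420 is further divided into YUV420P and YUV420SP. The main difference between P (planar) and SP (semi-planar) is that YUV420P stores U and V in two separate, consecutive planes, while YUV420SP stores U and V interleaved in a single plane. The byte layouts are:

- I420: YYYYYYYY UU VV -> YUV420P
- YV12: YYYYYYYY VV UU -> YUV420P
- NV12: YYYYYYYY UVUV -> YUV420SP
- NV21: YYYYYYYY VUVU -> YUV420SP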
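To make the layouts above concrete, here is a minimal sketch that computes the plane sizes and byte offsets for one frame. The struct and function names are hypothetical (they are not part of MediaPlus), and the sketch assumes even dimensions and tightly packed rows with no padding.

```cpp
#include <cassert>
#include <cstddef>

// Byte sizes and offsets of the planes inside one tightly packed YUV420 frame.
// Hypothetical helper type for illustration only.
struct Yuv420Layout {
    std::size_t ySize;      // bytes in the Y plane (width * height)
    std::size_t chromaSize; // bytes in ONE chroma plane (width * height / 4)
    std::size_t totalSize;  // bytes in the whole frame (width * height * 3 / 2)
    std::size_t uOffset;    // I420: start of the U plane
    std::size_t vOffset;    // I420: start of the V plane
    std::size_t vuOffset;   // NV21: start of the interleaved VU plane
};

// Assumes even width and height and no row padding (stride == width).
inline Yuv420Layout computeYuv420Layout(int width, int height) {
    assert(width > 0 && height > 0);
    assert(width % 2 == 0 && height % 2 == 0);
    Yuv420Layout layout{};
    layout.ySize = static_cast<std::size_t>(width) * static_cast<std::size_t>(height);
    layout.chromaSize = layout.ySize / 4;
    layout.totalSize = layout.ySize + 2 * layout.chromaSize;
    layout.uOffset = layout.ySize;                      // U follows Y in I420
    layout.vOffset = layout.ySize + layout.chromaSize;  // V follows U in I420
    layout.vuOffset = layout.ySize;                     // VU pairs follow Y in NV21
    return layout;
}
```

For an 8 x 6 frame this gives a 48-byte Y plane, two 12-byte chroma planes, and 72 bytes in total. For YV12 the U and V offsets would simply be swapped.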

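So why does H264 encoding require converting the NV21 data captured by the Android camera to YUV420P? These color formats confused me at first. The answer turned out to be simple: the H264 encoder used here takes I420 (AV_PIX_FMT_YUV420P) as input, so the color format has to be converted first. MediaPlus captures video as NV21. The following shows how to get each frame captured by the Android camera and convert its color format: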

- Getting the camera capture data:

```java
mCamera = Camera.open(Camera.CameraInfo.CAMERA_FACING_BACK);
mParams = mCamera.getParameters();
setCameraDisplayOrientation(this, Camera.CameraInfo.CAMERA_FACING_BACK, mCamera);
mParams.setPreviewSize(SRC_FRAME_WIDTH, SRC_FRAME_HEIGHT);
mParams.setPreviewFormat(ImageFormat.NV21); // preview format: NV21
mParams.setFocusMode(Camera.Parameters.FOCUS_MODE_CONTINUOUS_VIDEO);
mCamera.setDisplayOrientation(90);
mCamera.setParameters(mParams); // apply the camera parameters
mCamera.addCallbackBuffer(m_nv21);
mCamera.setPreviewCallbackWithBuffer(this);
mCamera.startPreview();

@Override
public void onPreviewFrame(byte[] data, Camera camera) {
    // data holds the captured NV21 frame
    // Hand the buffer back, otherwise onPreviewFrame may not be called again
    mCamera.addCallbackBuffer(m_nv21);
}
```

An NV21 frame needs width x height x 3 / 2 bytes (Y = W x H, U = W x H / 4, V = W x H / 4), so we allocate a byte array of that size as the capture buffer. The class MediaPlus>>app.mobile.nativeapp.com.libmedia.core.streamer.RtmpPushStreamer captures the audio and video data and hands it to the native layer. It uses two threads, AudioThread and VideoThread, to process audio and video respectively.

- First, look at VideoThread:

```java
/**
 * Video capture thread
 */
class VideoThread extends Thread {

    public volatile boolean m_bExit = false;
    byte[] m_nv21Data = new byte[mVideoSizeConfig.srcFrameWidth
            * mVideoSizeConfig.srcFrameHeight * 3 / 2];
    byte[] m_I420Data = new byte[mVideoSizeConfig.srcFrameWidth
            * mVideoSizeConfig.srcFrameHeight * 3 / 2];
    byte[] m_RotateData = new byte[mVideoSizeConfig.srcFrameWidth
            * mVideoSizeConfig.srcFrameHeight * 3 / 2];
    byte[] m_MirrorData = new byte[mVideoSizeConfig.srcFrameWidth
            * mVideoSizeConfig.srcFrameHeight * 3 / 2];

    @Override
    public void run() {
        super.run();

        VideoCaptureInterface.GetFrameDataReturn ret;
        while (!m_bExit) {
            try {
                Thread.sleep(1, 10);
                if (m_bExit) {
                    break;
                }
            } catch (InterruptedException e) {
                e.printStackTrace();
            }
            ret = mVideoCapture.GetFrameData(m_nv21Data,
                    m_nv21Data.length);
            if (ret == VideoCaptureInterface.GetFrameDataReturn.RET_SUCCESS) {
                frameCount++;
                // NV21 -> I420
                LibJniVideoProcess.NV21TOI420(mVideoSizeConfig.srcFrameWidth, mVideoSizeConfig.srcFrameHeight, m_nv21Data, m_I420Data);
                if (curCameraType == VideoCaptureInterface.CameraDeviceType.CAMERA_FACING_FRONT) {
                    // Front camera: undo the mirror, then rotate
                    LibJniVideoProcess.MirrorI420(mVideoSizeConfig.srcFrameWidth, mVideoSizeConfig.srcFrameHeight, m_I420Data, m_MirrorData);
                    LibJniVideoProcess.RotateI420(mVideoSizeConfig.srcFrameWidth, mVideoSizeConfig.srcFrameHeight, m_MirrorData, m_RotateData, 90);
                } else if (curCameraType == VideoCaptureInterface.CameraDeviceType.CAMERA_FACING_BACK) {
                    // Back camera: rotate only
                    LibJniVideoProcess.RotateI420(mVideoSizeConfig.srcFrameWidth, mVideoSizeConfig.srcFrameHeight, m_I420Data, m_RotateData, 90);
                }
                encodeVideo(m_RotateData, m_RotateData.length);
            }
        }
    }

    public void stopThread() {
        m_bExit = true;
    }
}
```

Why rotate? The Android camera always captures in landscape orientation, whether the phone is held in portrait or landscape, so in portrait there is an angle difference between the captured image and the device. The front camera needs a 270° rotation and the back camera a 90° rotation so that the captured image matches the orientation of the phone.
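MediaPlus delegates rotation to libyuv, but the operation itself is simple enough to sketch by hand. The following is a plain C++ illustration, not the MediaPlus or libyuv implementation: it rotates a tightly packed I420 frame 90° clockwise, plane by plane. The function names are hypothetical, and the sketch assumes even dimensions and no row padding.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Rotates one tightly packed plane 90 degrees clockwise.
// src is w wide and h high; dst ends up h wide and w high.
inline void rotatePlane90(const uint8_t* src, uint8_t* dst, int w, int h) {
    for (int y = 0; y < h; ++y) {
        for (int x = 0; x < w; ++x) {
            // Source pixel (x, y) lands in column (h - 1 - y) of row x.
            dst[x * h + (h - 1 - y)] = src[y * w + x];
        }
    }
}

// Rotates a whole I420 frame. The result has width = height and height = width
// of the input, and the same total size.
inline std::vector<uint8_t> rotateI420By90(const std::vector<uint8_t>& src,
                                           int width, int height) {
    assert(width > 0 && height > 0);
    assert(width % 2 == 0 && height % 2 == 0);
    const std::size_t ySize = static_cast<std::size_t>(width) * height;
    const std::size_t chromaSize = ySize / 4;
    assert(src.size() == ySize + 2 * chromaSize);

    std::vector<uint8_t> dst(src.size());
    // Y plane at full resolution, then U and V at half resolution each way.
    rotatePlane90(src.data(), dst.data(), width, height);
    rotatePlane90(src.data() + ySize, dst.data() + ySize, width / 2, height / 2);
    rotatePlane90(src.data() + ySize + chromaSize,
                  dst.data() + ySize + chromaSize, width / 2, height / 2);
    return dst;
}
```

Note that the rotated frame swaps width and height, so whatever consumes it (here, the encoder) has to be configured with the swapped dimensions.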

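The mirroring step is needed because the front camera's image is mirrored by default, so mirroring it once more restores the original image. MediaPlus uses libyuv for conversion, rotation, mirroring, and so on. MediaPlus>>app.mobile.nativeapp.com.libmedia.core.jni.LibJniVideoProcess provides the application-layer interface: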

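```java
package app.mobile.nativeapp.com.libmedia.core.jni;

import app.mobile.nativeapp.com.libmedia.core.config.MediaNativeInit;

/**
 * Color space processing
 * Created by android on 11/16/17.
 */
public class LibJniVideoProcess {

    static {
        MediaNativeInit.InitMedia();
    }

    /**
     * Convert NV21 to I420
     *
     * @param in_width  input width
     * @param in_height input height
     * @param srcData   source data
     * @param dstData   destination data
     * @return status code from the native layer
     */
    public static native int NV21TOI420(int in_width, int in_height,
                                        byte[] srcData,
                                        byte[] dstData);

    /**
     * Mirror I420
     *
     * @param in_width  input width
     * @param in_height input height
     * @param srcData   source data
     * @param dstData   destination data
     * @return status code from the native layer
     */
    public static native int MirrorI420(int in_width, int in_height,
                                        byte[] srcData,
                                        byte[] dstData);

    /**
     * Rotate I420 by the given angle
     *
     * @param in_width      input width
     * @param in_height     input height
     * @param srcData       source data
     * @param dstData       destination data
     * @param rotationValue rotation angle in degrees
     */
    public static native int RotateI420(int in_width, int in_height,
                                        byte[] srcData,
                                        byte[] dstData, int rotationValue);
}
```

libmedia/src/cpp/jni/jni_Video_Process.cpp is the JNI layer for image processing. libyuv is quite powerful and covers conversion between the YUV formats as well as other processing. Here is a brief description of its function parameters, taking one function as an example: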

```cpp
LIBYUV_API
int NV21ToI420(const uint8* src_y, int src_stride_y,
               const uint8* src_vu, int src_stride_vu,
               uint8* dst_y, int dst_stride_y,
               uint8* dst_u, int dst_stride_u,
               uint8* dst_v, int dst_stride_v,
               int width, int height);
```

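- src_y: pointer to the source Y plane
- src_stride_y: row stride of the source Y plane, in bytes
- src_vu: pointer to the source interleaved VU plane
- src_stride_vu: row stride of the source VU plane, in bytes
- dst_y: pointer to the destination Y plane
- dst_u: pointer to the destination U plane
- dst_v: pointer to the destination V plane
- dst_stride_y: row stride of the destination Y plane, in bytes
- dst_stride_u: row stride of the destination U plane, in bytes
- dst_stride_v: row stride of the destination V plane, in bytes
- width: video width
- height: video height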
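To show how these pointer and stride parameters work together, here is a hand-written sketch with the same parameter order as the signature above. It is not libyuv's implementation (libyuv is heavily optimized); it only illustrates the idea: copy the Y rows, then split each interleaved VU row into separate U and V rows. The function name is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>

// Illustrative NV21 -> I420 conversion. Returns 0 on success, -1 on bad arguments.
inline int nv21ToI420Sketch(const uint8_t* src_y, int src_stride_y,
                            const uint8_t* src_vu, int src_stride_vu,
                            uint8_t* dst_y, int dst_stride_y,
                            uint8_t* dst_u, int dst_stride_u,
                            uint8_t* dst_v, int dst_stride_v,
                            int width, int height) {
    if (!src_y || !src_vu || !dst_y || !dst_u || !dst_v) return -1;
    if (width <= 0 || height <= 0 || width % 2 != 0 || height % 2 != 0) return -1;
    // A stride is the distance in bytes between two rows, so it must cover a row.
    assert(src_stride_y >= width && dst_stride_y >= width);
    assert(src_stride_vu >= width);  // width / 2 pairs of 2 bytes each
    assert(dst_stride_u >= width / 2 && dst_stride_v >= width / 2);

    // 1. The Y plane is identical in both formats: copy it row by row.
    for (int row = 0; row < height; ++row) {
        std::memcpy(dst_y + static_cast<std::ptrdiff_t>(row) * dst_stride_y,
                    src_y + static_cast<std::ptrdiff_t>(row) * src_stride_y,
                    static_cast<std::size_t>(width));
    }

    // 2. De-interleave VUVU... into a U plane and a V plane.
    for (int row = 0; row < height / 2; ++row) {
        const uint8_t* vu = src_vu + static_cast<std::ptrdiff_t>(row) * src_stride_vu;
        uint8_t* u = dst_u + static_cast<std::ptrdiff_t>(row) * dst_stride_u;
        uint8_t* v = dst_v + static_cast<std::ptrdiff_t>(row) * dst_stride_v;
        for (int col = 0; col < width / 2; ++col) {
            v[col] = vu[2 * col];      // NV21 stores V first
            u[col] = vu[2 * col + 1];  // then U
        }
    }
    return 0;
}
```

Passing strides separately from the width is what lets the same function handle buffers whose rows are padded for alignment.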

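- Suppose the image is 8 (width) x 6 (height). The function arguments are then:

```cpp
int width = 8;
int height = 6;
// Source buffer (NV21), width * height * 3 / 2 bytes, allocated elsewhere
uint8_t *srcNV21Data;
// Destination buffer (I420), same size, allocated elsewhere
uint8_t *dstI420Data;

const uint8_t *src_y = srcNV21Data;
const uint8_t *src_vu = srcNV21Data + (width * height);
int src_stride_y = width;
// Each VU row holds width / 2 interleaved (V, U) pairs, so it is width bytes long
int src_stride_vu = width;

uint8_t *dst_y = dstI420Data;
uint8_t *dst_u = dstI420Data + (width * height);
uint8_t *dst_v = dstI420Data + (width * height * 5 / 4);
int dst_stride_y = width;
int dst_stride_u = width / 2;
int dst_stride_v = width / 2;
```

The following code calls libyuv to do the image conversion, rotation, and mirroring: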

```cpp
//
// Created by developer on 11/16/17.
//
#include "jni_Video_Process.h"

#ifdef __cplusplus
extern "C" {
#endif

JNIEXPORT jint JNICALL
Java_app_mobile_nativeapp_com_libmedia_core_jni_LibJniVideoProcess_NV21TOI420(JNIEnv *env,
                                                                              jclass type,
                                                                              jint in_width,
                                                                              jint in_height,
                                                                              jbyteArray srcData_,
                                                                              jbyteArray dstData_) {
    jbyte *srcData = env->GetByteArrayElements(srcData_, NULL);
    jbyte *dstData = env->GetByteArrayElements(dstData_, NULL);

    VideoProcess::NV21TOI420(in_width, in_height, (const uint8_t *) srcData,
                             (uint8_t *) dstData);

    // Release the arrays: the source was only read (JNI_ABORT skips the copy-back),
    // the destination is committed back to the Java array (mode 0).
    env->ReleaseByteArrayElements(srcData_, srcData, JNI_ABORT);
    env->ReleaseByteArrayElements(dstData_, dstData, 0);
    return 0;
}

JNIEXPORT jint JNICALL
Java_app_mobile_nativeapp_com_libmedia_core_jni_LibJniVideoProcess_MirrorI420(JNIEnv *env,
                                                                              jclass type,
                                                                              jint in_width,
                                                                              jint in_height,
                                                                              jbyteArray srcData_,
                                                                              jbyteArray dstData_) {
    jbyte *srcData = env->GetByteArrayElements(srcData_, NULL);
    jbyte *dstData = env->GetByteArrayElements(dstData_, NULL);

    VideoProcess::MirrorI420(in_width, in_height, (const uint8_t *) srcData,
                             (uint8_t *) dstData);

    env->ReleaseByteArrayElements(srcData_, srcData, JNI_ABORT);
    env->ReleaseByteArrayElements(dstData_, dstData, 0);
    return 0;
}

JNIEXPORT jint JNICALL
Java_app_mobile_nativeapp_com_libmedia_core_jni_LibJniVideoProcess_RotateI420(JNIEnv *env,
                                                                              jclass type,
                                                                              jint in_width,
                                                                              jint in_height,
                                                                              jbyteArray srcData_,
                                                                              jbyteArray dstData_,
                                                                              jint rotationValue) {
    jbyte *srcData = env->GetByteArrayElements(srcData_, NULL);
    jbyte *dstData = env->GetByteArrayElements(dstData_, NULL);

    jint ret = VideoProcess::RotateI420(in_width, in_height, (const uint8_t *) srcData,
                                        (uint8_t *) dstData, rotationValue);

    env->ReleaseByteArrayElements(srcData_, srcData, JNI_ABORT);
    env->ReleaseByteArrayElements(dstData_, dstData, 0);
    return ret;
}

#ifdef __cplusplus
}
#endif
```

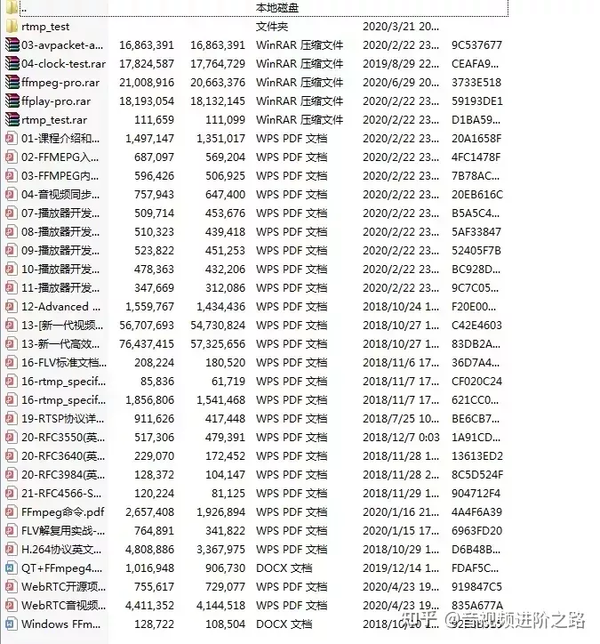


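The code above converts NV21 to I420 and performs the related processing. The data is then passed to the native layer, where FFmpeg does the H264 encoding. The figure below is the class diagram of the native C++ wrapper classes:

The class diagram shows that MediaEncoder depends on MediaCapture, and MediaPushStreamer depends on MediaEncoder. VideoCapture receives video data and buffers it in videoCaptureframeQueue, and AudioCapture receives audio data and buffers it in audioCaptureframeQueue, so RtmpPushStreamer can call MediaEncoder to encode the audio and video and push the stream.

In MediaPlus>>app.mobile.nativeapp.com.libmedia.core.streamer.RtmpPushStreamer, InitNative() calls initCapture() to initialize the two classes that receive audio and video data, and initEncoder() to initialize the audio and video encoders. When startPushStream is called to begin live streaming, the JNI method LiveJniMediaManager.StartPush(pushUrl) starts native encoding and push streaming.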

```java
/**
 * Initialize the native capture classes and encoders
 */
private boolean InitNative() {
    if (!initCapture()) {
        return false;
    }
    if (!initEncoder()) {
        return false;
    }
    Log.d("initNative", "native init success!");
    nativeInt = true;
    return nativeInt;
}

/**
 * Start push streaming
 *
 * @param pushUrl the push URL
 * @return true if the native push started successfully
 */
private boolean startPushStream(String pushUrl) {
    if (nativeInt) {
        int ret = 0;
        ret = LiveJniMediaManager.StartPush(pushUrl);
        if (ret < 0) {
            Log.d("initNative", "native push failed!");
            return false;
        }
        return true;
    }
    return false;
}
```

The JNI-layer call made when push streaming starts is shown below:

```cpp
/**
 * Start push streaming
 */
JNIEXPORT jint JNICALL
Java_app_mobile_nativeapp_com_libmedia_core_jni_LiveJniMediaManager_StartPush(JNIEnv *env,
                                                                              jclass type,
                                                                              jstring url_) {
    mMutex.lock();
    if (videoCaptureInit && audioCaptureInit) {
        startStream = true;
        isClose = false;
        videoCapture->StartCapture();
        audioCapture->StartCapture();
        const char *url = env->GetStringUTFChars(url_, 0);
        rtmpStreamer = RtmpStreamer::Get();
        // Initialize the streamer
        if (rtmpStreamer->InitStreamer(url) != 0) {
            LOG_D(DEBUG, "jni initStreamer failed!");
            env->ReleaseStringUTFChars(url_, url);
            mMutex.unlock();
            return -1;
        }
        rtmpStreamer->SetVideoEncoder(videoEncoder);
        rtmpStreamer->SetAudioEncoder(audioEncoder);
        if (rtmpStreamer->StartPushStream() != 0) {
            LOG_D(DEBUG, "jni push stream failed!");
            videoCapture->CloseCapture();
            audioCapture->CloseCapture();
            rtmpStreamer->ClosePushStream();
            env->ReleaseStringUTFChars(url_, url);
            mMutex.unlock();
            return -1;
        }
        LOG_D(DEBUG, "jni push stream success!");
        env->ReleaseStringUTFChars(url_, url);
    }
    mMutex.unlock();
    return 0;
}
```

AudioCapture and VideoCapture receive the audio and video data and the capture parameters passed down from the application layer; the video data is the I420 output of the libyuv conversion. Once LiveJniMediaManager.StartPush(pushUrl) has called videoCapture->StartCapture(), VideoCapture can receive the video data passed down from the upper layer through the following call:

```java
LiveJniMediaManager.EncodeH264(videoBuffer, length);
```

```cpp
JNIEXPORT jint JNICALL
Java_app_mobile_nativeapp_com_libmedia_core_jni_LiveJniMediaManager_EncodeH264(JNIEnv *env,
                                                                               jclass type,
                                                                               jbyteArray videoBuffer_,
                                                                               jint length) {
    if (videoCaptureInit && !isClose) {
        jbyte *videoSrc = env->GetByteArrayElements(videoBuffer_, 0);
        // Copy the frame so the queue owns its own buffer
        uint8_t *videoDstData = (uint8_t *) malloc(length);
        memcpy(videoDstData, videoSrc, length);
        OriginData *videoOriginData = new OriginData();
        videoOriginData->size = length;
        videoOriginData->data = videoDstData;
        videoCapture->PushVideoData(videoOriginData);
        env->ReleaseByteArrayElements(videoBuffer_, videoSrc, 0);
    }
    return 0;
}
```

After receiving the data, VideoCapture buffers it in a synchronized queue:

```cpp
/**
 * Add video data to the queue
 */
int VideoCapture::PushVideoData(OriginData *originData) {
    if (ExitCapture) {
        return 0;
    }
    // Timestamp the frame on arrival (microseconds)
    originData->pts = av_gettime();
    LOG_D(DEBUG, "video capture pts :%lld", originData->pts);
    videoCaputureframeQueue.push(originData);
    return originData->size;
}
```
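The source does not show how the synchronized queue itself is implemented, so the following is only a sketch of one way such a queue could look: a mutex-protected queue with a non-blocking pop, which fits a consumer that polls and checks for NULL. The class name is hypothetical, and the capacity limit is my own assumption (it keeps a slow encoder from growing memory without bound); the source does not say whether its queue is bounded.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <mutex>
#include <utility>

// Hypothetical thread-safe frame queue shared by a capture (producer) thread
// and an encode/push (consumer) thread.
template <typename T>
class BoundedFrameQueue {
public:
    explicit BoundedFrameQueue(std::size_t capacity) : capacity_(capacity) {
        assert(capacity_ > 0);
    }

    // Producer side. Returns false, leaving the queue unchanged, when it is full.
    bool push(T item) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (items_.size() >= capacity_) return false;
        items_.push_back(std::move(item));
        return true;
    }

    // Consumer side. Non-blocking: returns false when nothing is queued.
    bool tryPop(T& out) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (items_.empty()) return false;
        out = std::move(items_.front());
        items_.pop_front();
        return true;
    }

    // Discards everything that is currently queued.
    void clear() {
        std::lock_guard<std::mutex> lock(mutex_);
        items_.clear();
    }

    std::size_t size() const {
        std::lock_guard<std::mutex> lock(mutex_);
        return items_.size();
    }

private:
    const std::size_t capacity_;
    mutable std::mutex mutex_;
    std::deque<T> items_;
};
```

If the queue stores raw pointers, as the OriginData pointers above suggest, the caller is still responsible for freeing any frame that push rejects or clear discards.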

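libmedia/src/main/cpp/core/VideoEncoder.cpp and libmedia/src/main/cpp/core/RtmpStreamer.cpp contain the two core classes: the former encodes the video and the latter pushes the stream over RTMP. From the call rtmpStreamer->SetVideoEncoder(videoEncoder) in the JNI push-start code above, you can see that RtmpStreamer depends on VideoEncoder. The following shows how the two work together to encode and push: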

```cpp
/**
 * Video encoding task
 */
void *RtmpStreamer::PushVideoStreamTask(void *pObj) {
    RtmpStreamer *rtmpStreamer = (RtmpStreamer *) pObj;
    rtmpStreamer->isPushStream = true;

    if (NULL == rtmpStreamer->videoEncoder) {
        return 0;
    }
    VideoCapture *pVideoCapture = rtmpStreamer->videoEncoder->GetVideoCapture();
    AudioCapture *pAudioCapture = rtmpStreamer->audioEncoder->GetAudioCapture();

    if (NULL == pVideoCapture) {
        return 0;
    }
    int64_t beginTime = av_gettime();
    int64_t lastAudioPts = 0;
    while (true) {
        if (!rtmpStreamer->isPushStream ||
            pVideoCapture->GetCaptureState()) {
            break;
        }

        OriginData *pVideoData = pVideoCapture->GetVideoData();
        // OriginData *pAudioData = pAudioCapture->GetAudioData();
        // h264 encode
        if (pVideoData != NULL && pVideoData->data) {
            // if(pAudioData&&pAudioData->pts>pVideoData->pts){
            //     int64_t overValue=pAudioData->pts-pVideoData->pts;
            //     pVideoData->pts+=overValue+1000;
            //     LOG_D(DEBUG, "synchronized video audio pts videoPts:%lld audioPts:%lld", pVideoData->pts,pAudioData->pts);
            // }
            // Make the PTS relative to the start of the stream
            pVideoData->pts = pVideoData->pts - beginTime;
            LOG_D(DEBUG, "before video encode pts:%lld", pVideoData->pts);
            rtmpStreamer->videoEncoder->EncodeH264(&pVideoData);
            LOG_D(DEBUG, "after video encode pts:%lld", pVideoData->avPacket->pts);

            if (pVideoData != NULL && pVideoData->avPacket->size > 0) {
                rtmpStreamer->SendFrame(pVideoData, rtmpStreamer->videoStreamIndex);
            }
        }
    }
    return 0;
}
```

```cpp
int RtmpStreamer::StartPushStream() {
    videoStreamIndex = AddStream(videoEncoder->videoCodecContext);
    audioStreamIndex = AddStream(audioEncoder->audioCodecContext);
    // Write the stream header on its own thread and wait for it to finish
    pthread_create(&t3, NULL, RtmpStreamer::WriteHead, this);
    pthread_join(t3, NULL);

    VideoCapture *pVideoCapture = videoEncoder->GetVideoCapture();
    AudioCapture *pAudioCapture = audioEncoder->GetAudioCapture();
    // Drop anything captured before the header was written
    pVideoCapture->videoCaputureframeQueue.clear();
    pAudioCapture->audioCaputureframeQueue.clear();

    if (writeHeadFinish) {
        pthread_create(&t1, NULL, RtmpStreamer::PushAudioStreamTask, this);
        pthread_create(&t2, NULL, RtmpStreamer::PushVideoStreamTask, this);
    } else {
        return -1;
    }
    // pthread_create(&t2, NULL, RtmpStreamer::PushStreamTask, this);
    return 0;
}
```

rtmpStreamer->StartPushStream() invokes RtmpStreamer::StartPushStream(), which starts the new threads:

```cpp
pthread_create(&t1, NULL, RtmpStreamer::PushAudioStreamTask, this);
pthread_create(&t2, NULL, RtmpStreamer::PushVideoStreamTask, this);
```

PushVideoStreamTask mainly makes the following calls:

- Get the buffered data from the VideoCapture queue: pVideoCapture->GetVideoData().
- Compute the PTS: pVideoData->pts = pVideoData->pts - beginTime.
- Encode the frame: rtmpStreamer->videoEncoder->EncodeH264(&pVideoData).
- Push the stream: rtmpStreamer->SendFrame(pVideoData, rtmpStreamer->videoStreamIndex).
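The four steps above can be sketched without FFmpeg to make the control flow easier to see. Everything in this block is a stand-in: RawFrame plays the role of OriginData, EncodedPacket the role of AVPacket, and the encode and send callbacks are hypothetical placeholders for VideoEncoder::EncodeH264 and RtmpStreamer::SendFrame. The sketch drains the queue once on the calling thread instead of looping on a worker thread.

```cpp
#include <cassert>
#include <cstdint>
#include <deque>
#include <functional>
#include <utility>
#include <vector>

struct RawFrame {            // stand-in for OriginData
    int64_t pts;             // capture time in microseconds
    std::vector<uint8_t> data;
};

struct EncodedPacket {       // stand-in for AVPacket
    int64_t pts = 0;
    std::vector<uint8_t> data;
};

using EncodeFn = std::function<bool(const RawFrame&, EncodedPacket&)>;
using SendFn = std::function<bool(const EncodedPacket&)>;

// Processes every queued frame and returns how many packets were sent.
inline int drainOnce(std::deque<RawFrame>& captured, int64_t beginTime,
                     const EncodeFn& encode, const SendFn& send) {
    assert(encode && send);
    int sent = 0;
    while (!captured.empty()) {
        // Step 1: take the oldest buffered frame.
        RawFrame frame = std::move(captured.front());
        captured.pop_front();
        if (frame.data.empty()) continue;  // nothing to encode

        // Step 2: make the PTS relative to the start of the stream.
        frame.pts -= beginTime;

        // Step 3: encode; skip frames for which no packet was produced.
        EncodedPacket packet;
        if (!encode(frame, packet) || packet.data.empty()) continue;

        // Step 4: push the packet.
        if (send(packet)) ++sent;
    }
    return sent;
}
```

Skipping a frame when the encoder returns no packet matters in practice, because an H264 encoder may buffer a few input frames before it emits its first output.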

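That completes the whole encode-and-push flow. So how is the encoding itself done? The encoder is initialized before push streaming starts, so RtmpStreamer only has to call VideoEncoder to encode. VideoCapture and RtmpStreamer are in effect a producer and a consumer. VideoEncoder::EncodeH264() performs the key step before pushing: video encoding.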

```cpp
int VideoEncoder::EncodeH264(OriginData **originData) {
    // Point the AVFrame planes at the raw I420 buffer
    av_image_fill_arrays(outputYUVFrame->data,
                         outputYUVFrame->linesize, (*originData)->data,
                         AV_PIX_FMT_YUV420P, videoCodecContext->width,
                         videoCodecContext->height, 1);
    outputYUVFrame->pts = (*originData)->pts;
    int ret = 0;
    ret = avcodec_send_frame(videoCodecContext, outputYUVFrame);
    if (ret != 0) {
#ifdef SHOW_DEBUG_INFO
        LOG_D(DEBUG, "avcodec video send frame failed");
#endif
    }
    av_packet_unref(&videoPacket);
    ret = avcodec_receive_packet(videoCodecContext, &videoPacket);
    if (ret != 0) {
#ifdef SHOW_DEBUG_INFO
        LOG_D(DEBUG, "avcodec video receive packet failed");
#endif
    }
    // The raw frame is no longer needed once it has been sent to the encoder
    (*originData)->Drop();
    (*originData)->avPacket = &videoPacket;
#ifdef SHOW_DEBUG_INFO
    LOG_D(DEBUG, "encode video packet size:%d pts:%lld", (*originData)->avPacket->size,
          (*originData)->avPacket->pts);
    LOG_D(DEBUG, "Video frame encode success!");
#endif
    return videoPacket.size;
}
```

That is the core of the H264 encoding: fill the AVFrame, then encode. The AVFrame data holds the raw frame before encoding, and after encoding the AVPacket data holds the compressed result, which RtmpStreamer::SendFrame() then sends out. While sending, the PTS and DTS must be converted from the local encoder's time base to the time base of the AVStream.
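The time-base conversion mentioned above is what FFmpeg's av_rescale_q does: it multiplies a timestamp by the ratio of the two time bases and rounds to the nearest integer. The sketch below reproduces that arithmetic in plain C++ so the numbers can be checked by hand. It is a simplification, not FFmpeg's code: Rational is a stand-in for AVRational, the function name is hypothetical, and it assumes non-negative timestamps and no overflow of the intermediate product.

```cpp
#include <cassert>
#include <cstdint>

struct Rational {  // stand-in for AVRational: one tick lasts num / den seconds
    int num;
    int den;
};

// Converts ts from time base `from` to time base `to`, rounding to nearest
// (halves round up, which matches away-from-zero for non-negative input).
inline int64_t rescaleTimestamp(int64_t ts, Rational from, Rational to) {
    assert(ts >= 0);
    assert(from.num > 0 && from.den > 0 && to.num > 0 && to.den > 0);
    // ts * (from.num / from.den) / (to.num / to.den)
    const int64_t numerator = static_cast<int64_t>(from.num) * to.den;
    const int64_t denominator = static_cast<int64_t>(from.den) * to.num;
    return (ts * numerator + denominator / 2) / denominator;
}
```

For example, a PTS of 33333 in a 1/1000000 (microsecond) time base becomes 33 in a 1/1000 (millisecond) time base, and one frame at 1/25 becomes 40 ticks at 1/1000. Which time bases the MediaPlus encoder and stream actually use is not stated in the text, so these values are illustrative only.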

int RtmpStreamer::SendFrame(OriginData *pData, int streamIndex) {

std::lock_guard<std::mutex> lk(mut1);

AVRational stime;

AVRational dtime;

AVPacket *packet = pData->avPacket;

packet->stream_index = streamIndex;

LOG_D(DEBUG, "write packet index:%d index:%d pts:%lld", packet->stream_index, streamIndex,

packet->pts);

// Determine whether this is an audio or a video packet

if (packet->stream_index == videoStreamIndex) {

stime = videoCodecContext->time_base;

dtime = videoStream->time_base;

} else if (packet->stream_index == audioStreamIndex) {

stime = audioCodecContext->time_base;

dtime = audioStream->time_base;

} else {

LOG_D(DEBUG, "unknown stream index");

return -1;

}

packet->pts = av_rescale_q(packet->pts, stime, dtime);

packet->dts = av_rescale_q(packet->dts, stime, dtime);

packet->duration = av_rescale_q(packet->duration, stime, dtime);

int ret = av_interleaved_write_frame(iAvFormatContext, packet);

if (ret == 0) {

if (streamIndex == audioStreamIndex) {

LOG_D(DEBUG, "---------->write @@@@@@@@@ frame success------->!");

} else if (streamIndex == videoStreamIndex) {

LOG_D(DEBUG, "---------->write ######### frame success------->!");

} else {